Not the usual thing to be on this forum, but hey you guys are a knowledgeable bunch and this has got me stumped. I have an Unraid server that hosts movies (mainly 4k remuxes) on 4x HGST HUH721212ALE600 drives, primarily served through Plex. It is wired directly to the Orbi router, which has wireless backhaul to the other 2 satellites. My main client is an Nvidia Shield TV Pro that is wired into one of the Orbi satellites.

So the issue is that when I am streaming one of the higher quality 4k remuxes (~80Mbps for 4k HDR + ~5Mbps for lossless audio) I am constantly running into buffering issues even though I am not doing any transcoding. I have tried the Plex app for Android, and I have also recently played around with Kodi and its various add-ons for Plex (Plex for Kodi, Composite, and PlexKodiConnect), and the issue always seems to be present. I even tried directly mounting the samba share from Unraid in Kodi and it buffers even then. While streaming, the Unraid server shows as uploading ~90Mb/s with spikes up to 120Mb/s.

Take an older Intel i5 3570K as an example. It's a 4 core CPU plus an integrated GPU. Video can be decoded by the 4 cores through software decoders (codecs built into VLC) or by the iGPU using its own built-in Quick Sync decoder (hardware acceleration). AMD processors with integrated graphics, and AMD/Nvidia discrete GPUs, can also be used for hardware acceleration. The iGPU in a 3570K is too old to support any of the common 4k codecs used today, so it falls on the CPU to decode and play the content in software. Playing 4k on a 1080p screen used 60% CPU when I was trying it out.

If you compare a current Atom/Celeron processor with an integrated GPU, the CPU won't even be as powerful as the older 3570K, but the iGPU will support the latest codecs. It will have no problem playing 4k content, but if you try to play something newer or unsupported by the iGPU, it will fall back to the CPU to decode the video, and then the lower computational power is the limiting factor. I don't know much about what's used in TVs, but I would assume it's a very low powered CPU that runs the UI, and a highly optimized video decoder (something like an iGPU) to play the content.

Edit: just changed a bit for clarity and wanted to add this link so you can see the difference in Intel iGPU generations.

So I have just recently set up Tdarr on my unRAID server to encode all my x264 1080p movies into H265, purely for space saving. I noticed in Plex on Windows that some of the videos are looking really washed out and blacks are very grey. I assumed it was just some sort of issue with the encode into H265. I then opened the .mkv in VLC to compare a screenshot of the same movie on Plex vs VLC.

Now it's important to note, I am NOT transcoding the file in Plex. I am watching it in "Original Quality", and the server is located on my own network so it's not a limitation there either. It also was originally a 1080p 8bit file encoded to 1080p 10bit; it's not 4k HDR content originally. So my question is, why does the file look so much better on VLC than Plex? Is there any way to fix this?
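If anyone wants to narrow down a case like this, one thing that may be worth checking is what the re-encoded file actually declares about itself. This is only a rough sketch, not something from the original posts: it assumes `ffprobe` (part of FFmpeg) is installed and on the PATH, and the function names `probe_video_stream` and `describe_stream` are made up for illustration. It reads the codec, pixel format and color tags of the first video stream and reports which color tags are missing. Whether missing tags or 10-bit output are behind the washed out look is an open question; this just gives you something concrete to compare between a good and a bad file.

```python
import json
import subprocess
import sys

# Stream fields that are useful to look at when colors look off after a re-encode.
FIELDS = "codec_name,pix_fmt,color_range,color_space,color_transfer,color_primaries"
COLOR_FIELDS = ["color_range", "color_space", "color_transfer", "color_primaries"]


def probe_video_stream(path):
    """Ask ffprobe (assumed to be on PATH) about the first video stream."""
    cmd = [
        "ffprobe", "-v", "error",
        "-select_streams", "v:0",
        "-show_entries", f"stream={FIELDS}",
        "-of", "json",
        path,
    ]
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    streams = json.loads(result.stdout).get("streams", [])
    return streams[0] if streams else {}


def describe_stream(stream):
    """Summarise a stream dict: codec, pixel format, and untagged color fields."""
    pix_fmt = stream.get("pix_fmt", "")
    # Heuristic on the name only: FFmpeg calls 10-bit planar formats e.g. yuv420p10le.
    is_10bit = "p10" in pix_fmt
    # A field counts as untagged if it is absent or reported as "unknown".
    untagged = [f for f in COLOR_FIELDS if stream.get(f) in (None, "unknown")]
    return {
        "codec": stream.get("codec_name"),
        "pix_fmt": pix_fmt,
        "is_10bit": is_10bit,
        "untagged": untagged,
    }


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python check_encode.py /path/to/movie.mkv
    print(describe_stream(probe_video_stream(sys.argv[1])))
```

Running it against both the original x264 file and the Tdarr output gives two summaries you can compare side by side.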

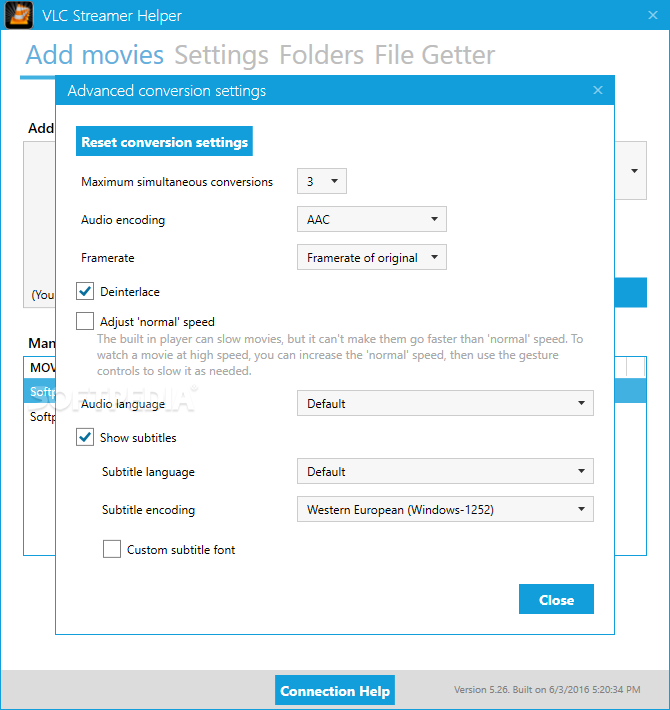

Edit: Turning OFF hardware decoding seems to have solved the "issue".
